FileFusion: The Go-Powered CLI for Smart File Concatenation and LLM Optimization


"I want to learn Go, and what better way to do it than building a couple of projects? Let’s start easy, with a CLI that does some parallel processing." That was the thought that sparked FileFusion. Well, that and the realization that my LLM performs way better when it has access to some of my code.

Presenting FileFusion: https://github.com/drgsn/filefusion - a powerful file concatenation tool designed specifically for Large Language Model (LLM) workflows.

The Problem: Context Management for LLMs

Let's face it: working with LLMs is like trying to explain your code to a brilliant but extremely literal-minded colleague who hasn't had their morning coffee yet. They need context. Lots of context. And while IDE plugins are great, they sometimes fall short when you need comprehensive codebase understanding.

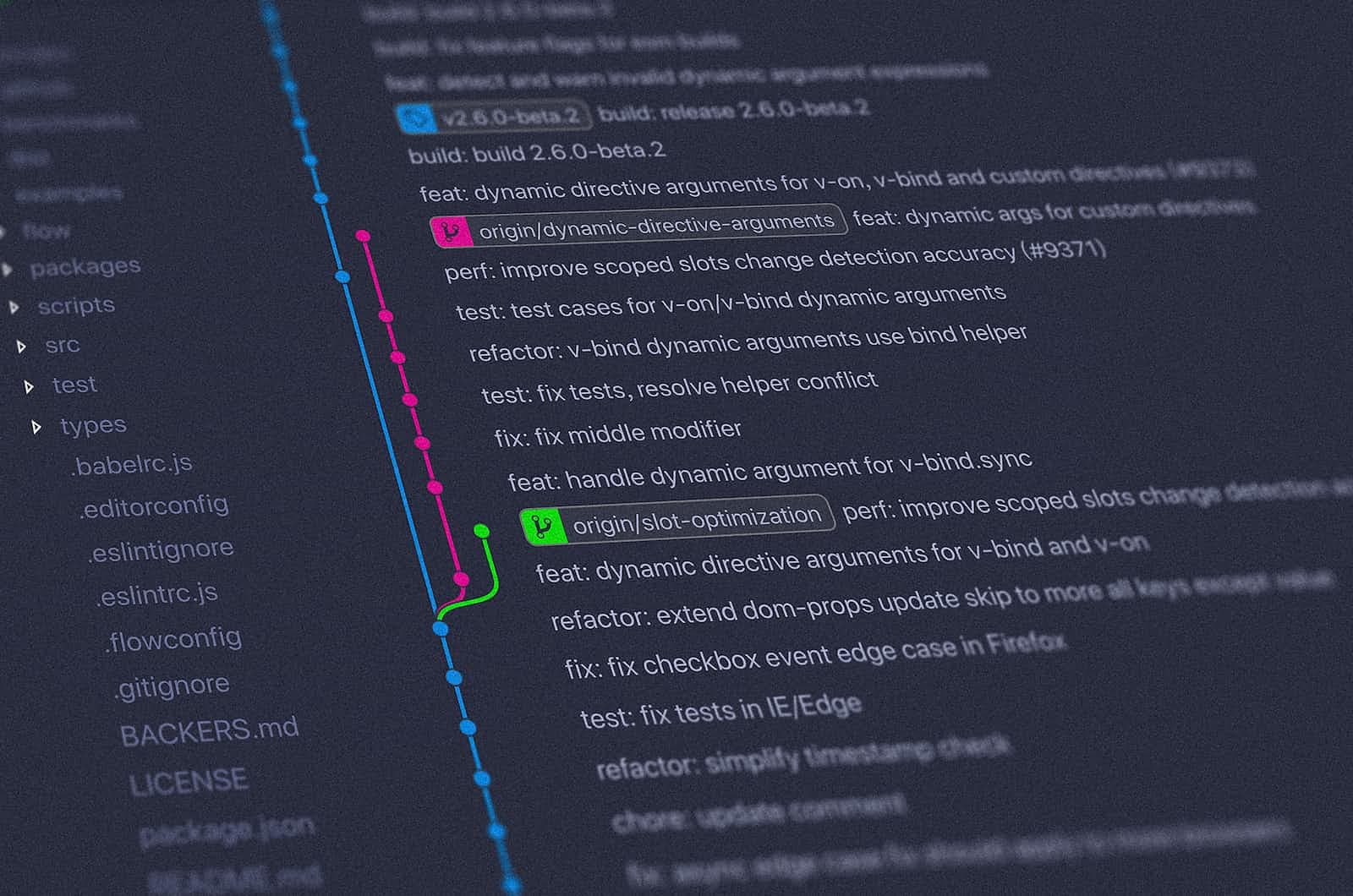

I found that using the project features in Claude and ChatGPT – where you can upload chunks of codebases – often yielded the best results. But there was a catch (isn't there always?): managing what code goes into your LLM context is crucial for getting meaningful responses, and keeping it synced with the changes from git is a pain.

That's where FileFusion comes in, like a helpful barista serving up perfectly filtered code to our caffeine-deprived LLM friend.

How LLMs "Understand" Code

Before diving into FileFusion's features, let's talk about how LLMs process code. You might think they read code like we do, but they're more like that friend who memorized the entire dictionary but still occasionally uses words in hilariously wrong contexts.

LLMs don't really "understand" code in the way human programmers do. Instead, they:

Process Code as Tokens: When you feed code to an LLM, it breaks it down into tokens - small chunks of text. For example, the line function calculateTotal() might be broken into tokens like ["function", "calculate", "Total", "(", ")"].

Look for Patterns: LLMs have been trained on massive amounts of code, so they recognize patterns. They're like that senior developer who's seen so many codebases they can spot a design pattern from a mile away – except the LLM has seen millions of codebases.

Use Statistical Relationships: The model understands relationships between different parts of code based on statistical patterns in its training data. It's similar to how you might guess what a function does based on its name and context, except the LLM is doing this based on probabilities learned from its training data.

Context is Everything: LLMs rely heavily on context to generate meaningful responses. The more relevant context they have, the better they can understand and respond to questions about the code. It's like trying to debug a function – the more context you have about where and how it's used, the better you can understand what it's supposed to do.

This is why cleaning and optimizing code for LLM consumption is so important. Comments might be helpful for humans, but they can actually confuse LLMs by adding noise to the statistical patterns they're looking for. Similarly, repetitive boilerplate code like getters and setters can dilute the meaningful patterns in the code.

Smaller context is better context

TDD for the win

Smart Pattern Matching with Glob Patterns

Now, let's talk about how FileFusion helps you select exactly what code to feed your LLM friend. It uses glob patterns, which are like regular expressions' more approachable cousin. You know, the one that doesn't make you question your life choices every time you use it.

    # Process only Python and JavaScript files
    filefusion --pattern "*.py,*.js" /path/to/project

    # Exclude test files and vendor directories
    filefusion -e "**/*_test.go,vendor/**" /path/to/project

    # Complex pattern matching (for when you're feeling adventurous)
    filefusion -p "src/**/{test,main}/*.{js,ts}" -e "**/node_modules/**"

The pattern matching is powered by the doublestar library, which is like filepath.Match after it went to the gym and got some serious upgrades:

- ** for recursive directory matching (because life's too short to list every subdirectory)

- Brace expansion for alternatives, because you don’t want to type the same pattern with different extensions

- Multiple patterns support (because sometimes one pattern just isn't enough)

- Negative patterns with ! (for when you need to tell your build artifacts to kindly see themselves out)
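For a feel of what the ** wildcard and include/exclude filtering involve, here is a minimal sketch built only on the standard library's path.Match. It is not doublestar's implementation and not FileFusion's code: matchGlob and selected are hypothetical helpers, brace expansion is left out, and the rule that excludes win over includes is my assumption.

```go
package main

import (
	"fmt"
	"path"
	"strings"
)

// matchSegments matches path segments against pattern segments.
// A "**" pattern segment matches zero or more whole path segments;
// every other segment is handed to path.Match.
func matchSegments(pat, segs []string) bool {
	if len(pat) == 0 {
		return len(segs) == 0
	}
	if pat[0] == "**" {
		// Try letting "**" swallow 0, 1, 2, ... path segments.
		for i := 0; i <= len(segs); i++ {
			if matchSegments(pat[1:], segs[i:]) {
				return true
			}
		}
		return false
	}
	if len(segs) == 0 {
		return false
	}
	ok, err := path.Match(pat[0], segs[0])
	if err != nil || !ok {
		return false
	}
	return matchSegments(pat[1:], segs[1:])
}

// matchGlob reports whether a slash-separated name matches the pattern.
func matchGlob(pattern, name string) bool {
	return matchSegments(strings.Split(pattern, "/"), strings.Split(name, "/"))
}

// selected keeps a file if it matches some include pattern and no exclude pattern.
func selected(name string, includes, excludes []string) bool {
	for _, ex := range excludes {
		if matchGlob(ex, name) {
			return false
		}
	}
	for _, in := range includes {
		if matchGlob(in, name) {
			return true
		}
	}
	return false
}

func main() {
	includes := []string{"**/*.go"}
	excludes := []string{"**/*_test.go", "vendor/**"}
	files := []string{"main.go", "pkg/util.go", "pkg/util_test.go", "vendor/lib/dep.go", "README.md"}
	for _, f := range files {
		fmt.Printf("%-20s %v\n", f, selected(f, includes, excludes))
	}
}
```

In a real tool you would reach for doublestar rather than rolling this yourself; the sketch is only there to show why ** needs more than filepath.Match.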

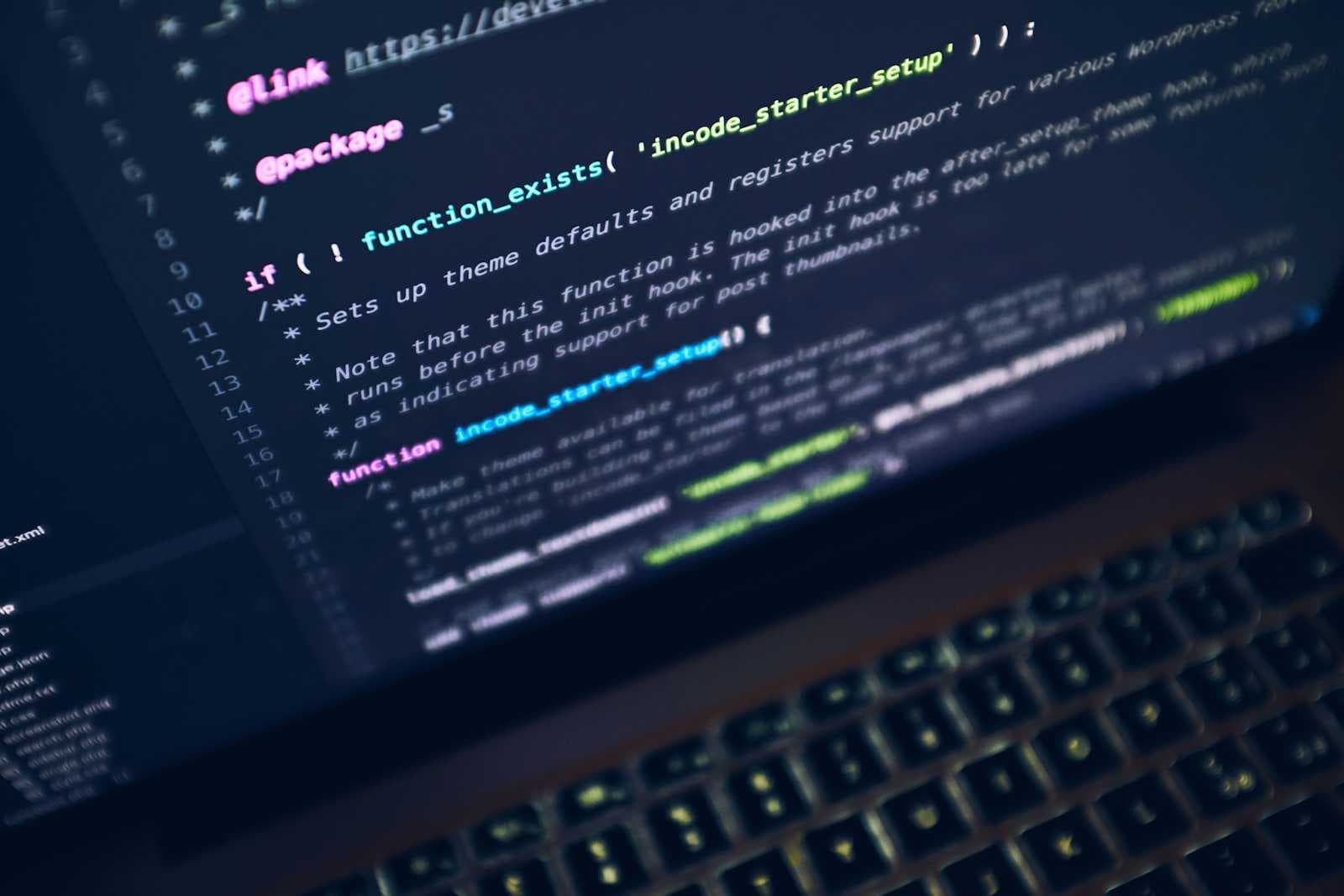

The Cleaning Engine: Tree-sitter Integration

Now for the really cool part: FileFusion uses tree-sitter to perform language-aware code optimization. If you're not familiar with tree-sitter, think of it as a really smart code parser that turns your code into a structured tree faster than you can say "abstract syntax tree" three times fast.

Here's how FileFusion puts tree-sitter to work:

- Language Detection: First, we figure out what language we're dealing with (because trying to parse Python like it's Java would be... interesting):

    func (p *FileProcessor) detectLanguage(path string) cleaner.Language {
        ext := strings.ToLower(filepath.Ext(path))
        switch ext {
        case ".go":
            return cleaner.LangGo
        case ".java":
            return cleaner.LangJava
        // ... more languages (because we're inclusive like that)
        }
        return ""
    }

- Syntax Tree Analysis: The code gets parsed into a tree structure, kind of like a family tree for your code, but with less dramatic holiday dinners:

    tree := parser.Parse(nil, input)
    root := tree.RootNode()

    // Now we can traverse the tree from root and identify specific nodes;
    // node below stands for each node visited during that walk.
    if node.Type() == "comment" {
        // Handle comments (or in some cases, show them the door)
    }

- Smart Cleaning: The cleaning engine can identify and remove:

  - Comments (while preserving documentation if desired)

  - Getter/setter methods (because even LLMs don't need to see getX() returning x for the millionth time)

  - Logging statements (sorry, debug logs, it's not you, it's us)

  - Import statements (when they're not needed)

  - Empty lines and excess whitespace

Concurrent Processing for Performance

FileFusion processes files concurrently using Go's goroutines and channels, because I wanted to use goroutines. I insisted on making my life hard, even though sequential processing would probably have been fast enough:

    func (p *FileProcessor) ProcessFiles(paths []string) ([]FileContent, error) {
        numWorkers := min(len(paths), 10) // Because 11 would be showing off
        results := make(chan FileResult, len(paths))
        jobs := make(chan string, len(paths))

        // Start our worker pool (like a pool party, but for goroutines)
        var wg sync.WaitGroup
        for i := 0; i < numWorkers; i++ {
            wg.Add(1)
            go func() {
                defer wg.Done()
                for path := range jobs {
                    result := p.processFile(path)
                    results <- result
                }
            }()
        }

        // The rest of the concurrent processing magic...
    }

Learning Go: Challenges and Insights

Building FileFusion was an excellent way to learn Go, though at times it felt like trying to learn juggling by starting with chainsaws. Some of the "fun" challenges included:

Goroutine Management: Ensuring proper synchronization and preventing goroutine leaks. It turns out "let them all run wild" is not a valid concurrent programming strategy.

Error Handling: Getting used to Go's error handling patterns. Yes, you have to check every error. No, crossing your fingers isn't an acceptable error handling strategy.

File System Operations: Working with filesystem operations concurrently required careful handling of race conditions. Because having multiple goroutines trying to access the same file is like having multiple people try to go through a revolving door at once – chaos ensues.

Memory Management: Processing large files efficiently meant being mindful of memory usage. But if your code files are that big, then that’s on you; I don’t think I’ll optimize for it.

Conclusion

FileFusion started as a "simple" learning project and turned into a full-fledged tool that makes preparing code for LLMs a breeze. While there were certainly moments where I questioned my life choices (particularly during concurrent file processing debugging sessions), the end result is something I'm proud of.

The tool fills a specific niche in the LLM workflow, helping developers prepare their code for LLM consumption by cleaning out unnecessary elements while preserving essential structure.

Future enhancements might include additional cleaning options, and perhaps integration with popular LLM platforms to automate the process of creating and updating projects. The project remains open source and welcomes contributions from the community. Just please don't submit pull requests written fully by an LLM without proper testing.